Analogue Computing Shapes the Artificial Intelligence (AI) Revolution

By Dr. Kasun Subasinghage

Summary

Analogue computing is emerging as a promising solution to meet the growing demands of the AI revolution. In contrast to digital computers, which are constrained by the Von Neumann bottleneck and higher power consumption, analogue systems perform real-time calculations using continuous physical quantities, thereby avoiding the data transfer limitations of digital designs. Innovations such as phase-change memory enhance the efficiency and scalability of analogue processors, enabling their use in applications such as autonomous vehicles, robotics, and edge AI. Despite challenges such as noise sensitivity and limited programmability, hybrid analogue-digital architectures and advancements in materials point to a promising future for analogue computing in AI.

Keywords

Analogue Computing, Artificial Intelligence, Energy Efficiency, Von Neumann Bottleneck

Content

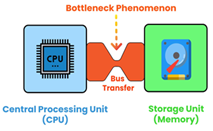

Figure 1: The data transfer between the CPU and the memory of a digital computer [3].


We are living in a digital age where electronic devices use binary digits (1s and 0s) to process information [1]. Digital computers are precise, versatile and easy to program with software. They are generally based on one of two architectures, Von Neumann or Harvard; the Von Neumann architecture is foundational to most digital computers due to its simplicity, cost-effectiveness and flexibility [2]. As AI applications grow, digital computers require faster Central Processing Units (CPUs) and more memory. Figure 1 shows the process of binary data transfer between the CPU and the memory of a digital computer [3]. However, due to the major limitation of the Von Neumann architecture known as the ‘Von Neumann bottleneck’, the data transfer rate between the CPU and memory becomes a limiting factor, hindering processing speed and efficiency [4]. In addition, Moore’s law, the observation that the number of transistors that can fit on a computer chip doubles nearly every two years, cannot hold indefinitely: digital computer circuitry will eventually reach a point where transistor size cannot be reduced further [5]. The high energy consumption of digital computers when running AI algorithms is another disadvantage.
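To make the bottleneck concrete, the following is a rough back-of-the-envelope sketch rather than a model of any real machine. It compares the time needed to move a set of model weights from memory with the time needed to do the arithmetic on them. The function name and every figure (weight count, bytes per weight, memory bandwidth, arithmetic rate) are hypothetical values chosen only for illustration.

```python
def transfer_and_compute_time(num_weights, bytes_per_weight,
                              bandwidth_bytes_per_s, ops_per_s):
    """Return (transfer_seconds, compute_seconds) for one pass over the weights."""
    # Time to move every weight from memory to the processor once.
    transfer_seconds = num_weights * bytes_per_weight / bandwidth_bytes_per_s
    # Assumption: each weight takes part in one multiply and one add.
    compute_seconds = 2 * num_weights / ops_per_s
    return transfer_seconds, compute_seconds


# Hypothetical figures, not measurements of any specific hardware.
transfer_s, compute_s = transfer_and_compute_time(
    num_weights=1_000_000_000,    # one billion weights
    bytes_per_weight=2,           # 16-bit values
    bandwidth_bytes_per_s=100e9,  # 100 GB/s between memory and processor
    ops_per_s=10e12,              # 10 trillion arithmetic operations per second
)

bottleneck = "memory transfer" if transfer_s > compute_s else "arithmetic"
print(f"transfer: {transfer_s:.4f} s, compute: {compute_s:.4f} s, "
      f"limited by {bottleneck}")
```

With these assumed numbers, moving the data takes about 100 times longer than the arithmetic itself, which is the situation the Von Neumann bottleneck describes.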

Figure 2: Heath Company’s Heathkit EC-1 educational analogue computer [6].


The counterpart of the digital computer is the analogue computer, which uses continuous physical quantities, such as voltage or current, to represent data and perform real-time calculations, making it ideal for scientific simulations and control systems [2, 5]. Figure 2 shows the Heath Company’s Heathkit EC-1, an educational analogue computer built in 1960 [6]. It was programmed using patch cords that connected nine operational amplifiers and other components [6]. Although analogue computers dominated during the mid-20th century, they were eventually overshadowed by the rise of digital computers, which offered higher precision and greater programmability.

Figure 3: M1076 Analogue Matrix Processor from Mythic [8].


Advances in AI have revived interest in analogue computers, leading to the development of modern analogue processors designed specifically to train and test AI and machine learning models. Over the past few years, these chips have demonstrated their potential in a wide variety of applications, such as autonomous vehicles and robotics. They can process data without shuttling it between the CPU and memory units, thereby eliminating the Von Neumann bottleneck of traditional digital computing and significantly increasing computation speed [5]. In addition, analogue processors use very little power, which makes them suitable for edge devices, enabling AI computations near the data source with no need for centralised cloud services. Analogue processors can mimic the neural structure of the human brain to perform complex pattern recognition and learning tasks more effectively than conventional digital systems. An example is IBM’s research on analogue AI chips that utilise Phase-Change Memory (PCM) devices to perform computations at the location of data storage, effectively solving the Von Neumann bottleneck [7]. Moreover, analogue AI processors, such as the M1076 built by Mythic (shown in Figure 3), allow computation to happen directly inside memory arrays against known weights, resulting in faster and more power-efficient data processing [8]. The deployment of this technology is expected to grow as manufacturers enhance their designs and ramp up manufacturing of small, robust analogue computers and processors.
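The idea of computing directly inside a memory array can be illustrated with a simplified simulation. The sketch below assumes an idealised crossbar model: each stored weight is a conductance, each input is a voltage, each device passes a current equal to voltage times conductance (Ohm's law), and the currents on a shared column wire add up (Kirchhoff's current law). This is a textbook idealisation and not a description of how the IBM or Mythic chips are actually built. The function name, the noise model and all values are hypothetical.

```python
import random


def crossbar_mvm(conductances, voltages, noise_std=0.0, rng=None):
    """Idealised analogue matrix-vector multiply.

    conductances[i][j] is the weight stored at row i, column j.
    voltages[i] is the input applied to row i.
    Returns one summed current per column: I_j = sum_i V_i * G_ij.
    """
    rng = rng or random.Random(0)
    num_columns = len(conductances[0])
    currents = []
    for j in range(num_columns):
        column_current = 0.0
        for i, voltage in enumerate(voltages):
            # Hypothetical noise model: each device deviates from its stored
            # value by a small random relative error.
            device = conductances[i][j] * (1.0 + rng.gauss(0.0, noise_std))
            column_current += voltage * device  # Ohm's law for one device
        currents.append(column_current)         # currents add on the column
    return currents


# Hypothetical weights (3 inputs, 2 outputs) and input values.
weights = [[1.0, 2.0],
           [3.0, 4.0],
           [5.0, 6.0]]
inputs = [0.5, 1.0, 2.0]

ideal = crossbar_mvm(weights, inputs)                  # noise-free result
noisy = crossbar_mvm(weights, inputs, noise_std=0.05)  # with 5% device noise
print("ideal:", ideal)
print("noisy:", noisy)
```

In this model every multiply-and-add happens where the weight is stored, so no weight has to travel to a separate processor. The noisy run also hints at the precision cost that comes with representing numbers as physical quantities.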

Despite their potential, analogue processors face challenges such as limited precision and sensitivity to noise. They are typically designed to perform specific types of calculations and cannot be programmed to perform other functions [2]. Hybrid architectures that combine analogue computation with digital correction mechanisms are emerging as a solution [9]. Scaling analogue processors for large-scale applications also remains a challenge, but ongoing innovations in materials and circuit design are promising.
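One simple way to picture a digital correction step is repeated measurement: the noisy analogue result is read several times and the digital side averages the readings. The sketch below simulates that idea under stated assumptions. It is only one hypothetical form of correction, not the mechanism used by any particular hybrid system, and the function names, noise level and values are invented for illustration.

```python
import random
import statistics


def noisy_analogue_dot(weights, inputs, noise_std, rng):
    """Simulated analogue dot product with random relative error per term."""
    return sum(w * x * (1.0 + rng.gauss(0.0, noise_std))
               for w, x in zip(weights, inputs))


def digitally_averaged_dot(weights, inputs, noise_std, repeats, rng):
    """Hypothetical digital correction: average repeated analogue readings."""
    readings = [noisy_analogue_dot(weights, inputs, noise_std, rng)
                for _ in range(repeats)]
    return statistics.fmean(readings)


rng = random.Random(42)       # fixed seed so the run is repeatable
weights = [0.2, -0.5, 0.9]    # hypothetical stored weights
inputs = [1.0, 2.0, 3.0]      # hypothetical input values

exact = sum(w * x for w, x in zip(weights, inputs))
single = noisy_analogue_dot(weights, inputs, noise_std=0.05, rng=rng)
averaged = digitally_averaged_dot(weights, inputs, noise_std=0.05,
                                  repeats=1000, rng=rng)

print(f"exact: {exact:.4f}")
print(f"single analogue reading: {single:.4f}")
print(f"average of 1000 readings: {averaged:.4f}")
```

Averaging trades extra readings for precision, so a real design would have to weigh the accuracy gained against the speed and energy advantages that motivated the analogue approach in the first place.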

Conclusion

Analogue computing holds the key to revolutionising AI by unlocking unparalleled efficiency and speed. As innovation pushes boundaries, this technology promises not just to complement but to redefine how we approach intelligent systems, paving the way for a smarter, greener future.

References

[1] M. G. Levy and M. Moyer. "What Is Analog Computing?" The Quanta Newsletter, 2024.

[2] "Difference between Von Neumann and Harvard Architecture." GeeksforGeeks. https://www.geeksforgeeks.org/difference-between-von-neumann-and-harvard-architecture/ (accessed 01 June 2025).

[3] K. Priyadarshi. "How in-memory Computing Could be a Game Changer in AI." techovedas. https://techovedas.com/how-in-memory-computing-could-be-game-changer-in-ai/ (accessed 08 June 2025).

[4] J. Hertz. "AI Chip Strikes Down the von Neumann Bottleneck With In-Memory Neural Net Processing." EETech Group. https://www.allaboutcircuits.com/news/ai-chip-strikes-down-von-neumann-bottleneck-in-memory-neural-network-processing/ (accessed 12 June 2025).

[5] G. Siviour. "Why AI and other emerging technologies may trigger a revival in analog computing." Kyndryl Inc. https://www.kyndryl.com/us/en/perspectives/articles/2024/03/analog-computing-renaissance (accessed 13 June 2025).

[6] "Heathkit EC-1." The Computer Museum. https://museum.syssrc.com/artifact/exhibits/252/ (accessed 16 June 2025).

[7] "Analog AI: Making Deep Neural Network systems more capable and energy-efficient." IBM. https://research.ibm.com/projects/analog-ai (accessed 18 June 2025).

[8] "M1076 Analog Matrix Processor." Mythic. https://mythic.ai/products/m1076-analog-matrix-processor/ (accessed 22 June 2025).

[9] "What is a hybrid computer?" Lenovo. https://www.lenovo.com/us/en/glossary/hybrid-computer/?orgRef=https%253A%252F%252Fwww.google.com%252F&srsltid=AfmBOooPDa9cwb4ZvcHub6kKbZBnA6b_MSV_LAM5v3X2H1xnvgPPMwkW (accessed 13 June 2025).

Dr. Kasun Subasinghage is a Senior Lecturer in the Department of Materials and Mechanical Technology at the Faculty of Technology, University of Sri Jayewardenepura, specialising in Mechatronics. He earned his Ph.D. from Auckland University of Technology, New Zealand, in 2019, following his M.Sc. and B.Sc. (Hons) in Electrical Engineering from the University of Moratuwa, Sri Lanka. His research focuses on the design and implementation of smart systems, supercapacitor-assisted systems and wireless communication technologies for industrial and applied mechatronic applications.
